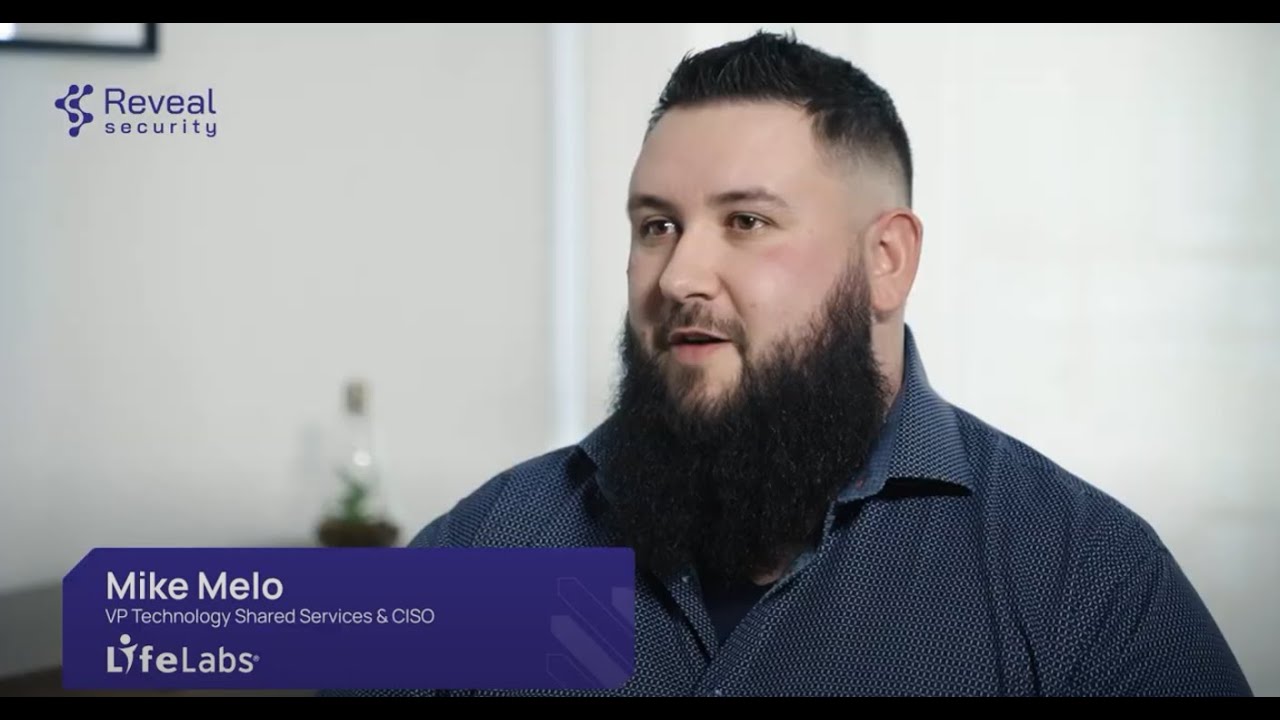

LifeLabs & Reveal Security

“I feel a lot more comfortable being able to sleep well knowing that our environments are protected… Reveal gives us an extremely accurate representation of how users and identities are interacting with our data and our applications systems.”