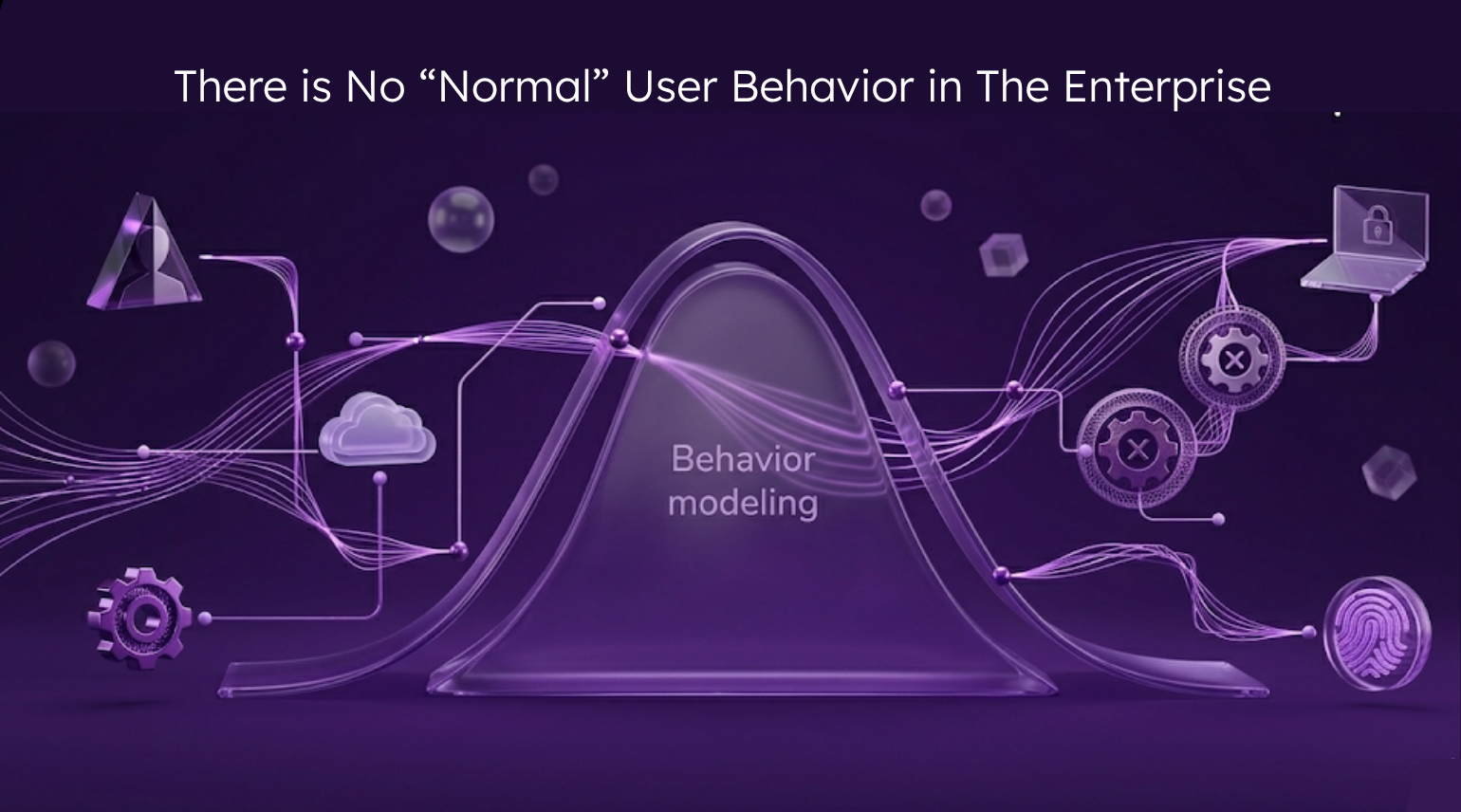

For years, UEBA tools have been built on a deceptively simple premise: establish a baseline of “normal” behavior, flag anything that deviates.

But “normal” implies statistical centrality: an average pulled from across your entire user population. In a modern enterprise, that average belongs to no one. A DevOps engineer, a finance analyst, a contractor accessing cloud storage at 2am across three continents: none of them behave like each other, and none of them behave like the mean. When you try to abstract behavior to a single normal, you’re not detecting threats. You’re creating false positives.

And the problem has only gotten harder.

Today’s enterprise identity surface isn’t just human. It’s machine identities spinning up and tearing down infrastructure. It’s AI agents executing workflows autonomously. It’s service accounts with privileges that no person ever provisioned intentionally. The behavioral models that struggled to capture human variability were never built for this.

The right question was never “is this normal?” It was always “is this typical for this identity?”

Those are fundamentally different questions. Every identity has its own behavioral fingerprint, made up of not one pattern, but several. A developer might have two or three distinct “typicals” depending on the sprint cycle, the environment they’re working in, or the time of week. And when you zoom out, some identities share similar patterns. At Reveal, we call these clusters. Think of them as groups of similarly functioning employees or non-human identities, a layer of context that filters out false positives.

So yes, you can look for patterns in detection, but they need to be tailored to that specific identity, and informed by the broader group it belongs to.
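To make the difference between “normal” and “typical for this identity” concrete, here is a minimal sketch. It is not Reveal’s implementation; the event data, the `build_profiles` and `rarity` helpers, and the frequency-based rarity measure are all assumptions chosen for illustration.

```python
from collections import Counter, defaultdict

def build_profiles(events):
    """Count how often each identity performs each action (hypothetical helper)."""
    profiles = defaultdict(Counter)
    for identity, action in events:
        profiles[identity][action] += 1
    return profiles

def rarity(profile, action):
    """Return 1.0 for a never-seen action, moving toward 0.0 for a routine one."""
    total = sum(profile.values())
    if total == 0:
        return 1.0  # no history at all: treat every action as maximally rare
    return 1.0 - profile[action] / total

# Made-up events for illustration only.
events = [
    ("devops-1", "deploy"), ("devops-1", "deploy"), ("devops-1", "ssh_prod"),
    ("finance-1", "open_ledger"), ("finance-1", "open_ledger"),
    ("finance-1", "export_report"),
]
profiles = build_profiles(events)

# The population-wide "normal" blends everyone into one profile.
global_profile = Counter(action for _, action in events)

devops_rarity = rarity(profiles["devops-1"], "ssh_prod")    # about 0.67
finance_rarity = rarity(profiles["finance-1"], "ssh_prod")  # 1.0, never seen
global_rarity = rarity(global_profile, "ssh_prod")          # about 0.83
```

The same action gets one score against the population average and two very different scores against the individual profiles, which is the gap the “typical for this identity” question is meant to expose.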

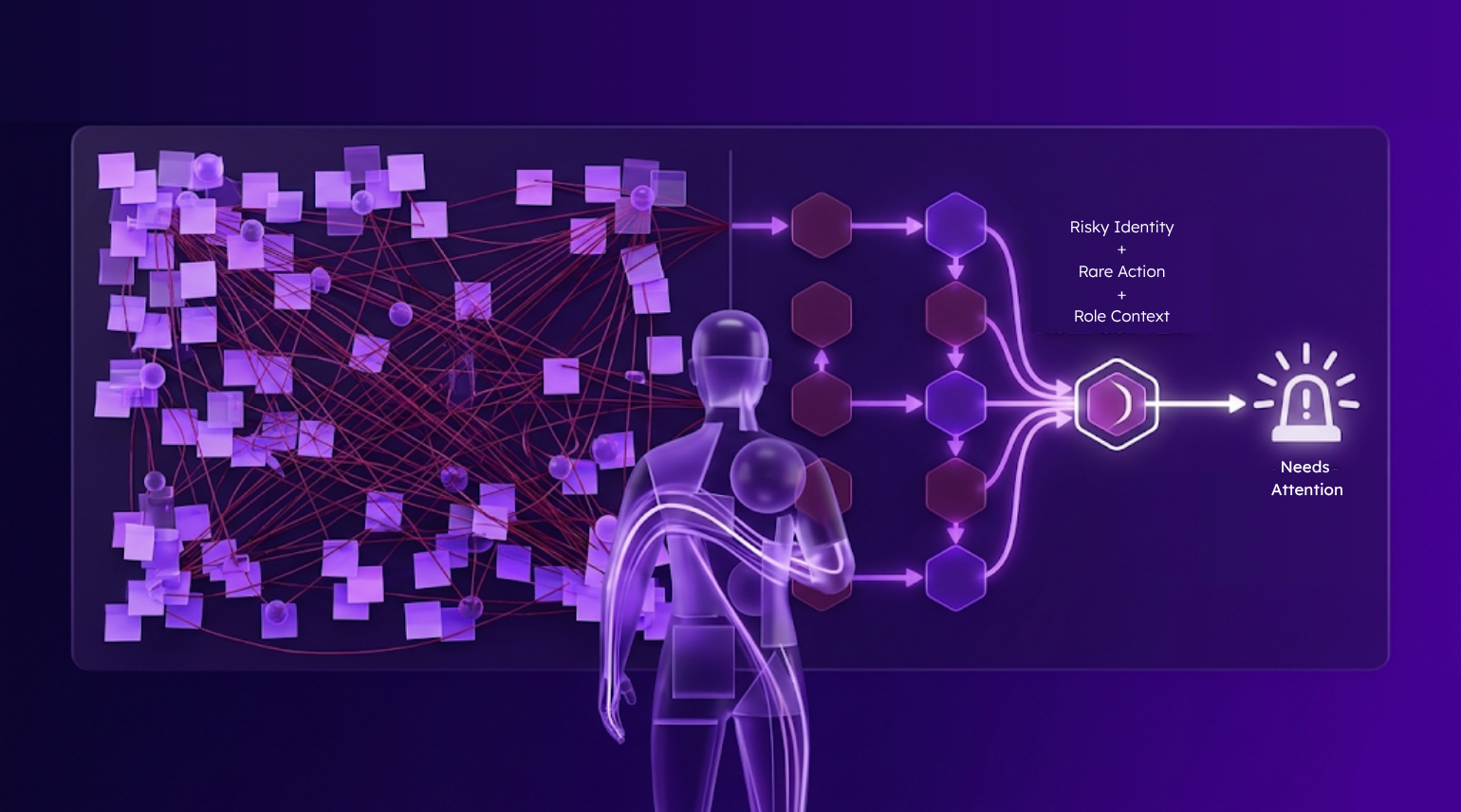

This is the core problem Reveal was built to solve. UEBA isn’t cutting it. Instead of rule-based, binary anomaly detection, where an action either crosses a threshold or it doesn’t, Reveal models typical behavior at the identity level. When something looks unusual, it doesn’t immediately fire an alert.

It asks a harder set of questions: How rare is this action for this identity? How sensitive is the resource being accessed? What could the potential attack path be from there? Are other identities with similar roles and permissions doing the same thing? What level of risk has this identity been operating at recently?

The answers feed into a single, weighted formula:

Anomalous behavior + sensitivity of security event + individual identity risk = detection.
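As an illustration of how a weighted combination like this could work, here is a small sketch. The weights, the 0-to-1 input scale, and the names `Weights` and `detection_score` are assumptions made for the example; the post does not specify Reveal’s actual weights or scoring scale.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Weights:
    # Hypothetical weights; chosen only so that they sum to 1.0.
    anomaly: float = 0.5
    sensitivity: float = 0.3
    identity_risk: float = 0.2

def detection_score(anomaly, sensitivity, identity_risk, weights=Weights()):
    """Combine three signals, each assumed to be normalized to the range 0..1."""
    inputs = {
        "anomaly": anomaly,
        "sensitivity": sensitivity,
        "identity_risk": identity_risk,
    }
    for name, value in inputs.items():
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
    return (
        weights.anomaly * anomaly
        + weights.sensitivity * sensitivity
        + weights.identity_risk * identity_risk
    )

# A rare action on a sensitive resource by a moderately risky identity.
score = detection_score(anomaly=0.9, sensitivity=0.8, identity_risk=0.4)  # roughly 0.77
```

Because the output is a graded score rather than a yes/no threshold, a rare action on a low-sensitivity resource and a rare action on a critical one no longer look the same.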

And clustering makes that signal even sharper. If a particular identity takes an anomalous action, but other similar identities behave that way frequently, the action is less likely to be malicious and more likely to be ordinary productive work.
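One way to picture the clustering effect is to discount an identity’s anomaly score by how common the action is among its peers. The sketch below assumes a simple linear discount and a made-up cluster; `peer_rate` and `peer_adjusted_anomaly` are hypothetical names, not part of any Reveal API.

```python
def peer_rate(cluster_actions, identity, action):
    """Fraction of the identity's cluster peers that have performed the action."""
    peers = [name for name in cluster_actions if name != identity]
    if not peers:
        return 0.0  # no peers to compare against, so no discount
    return sum(action in cluster_actions[peer] for peer in peers) / len(peers)

def peer_adjusted_anomaly(anomaly, rate):
    """Scale the anomaly down linearly as more peers share the behavior (assumed rule)."""
    return anomaly * (1.0 - rate)

# Made-up cluster of similarly functioning identities and their observed actions.
cluster = {
    "dev-a": {"deploy", "ssh_prod"},
    "dev-b": {"deploy", "ssh_prod"},
    "dev-c": {"deploy"},
    "dev-d": {"deploy"},
}

# dev-c uses ssh_prod for the first time; two of its three peers already do.
common_rate = peer_rate(cluster, "dev-c", "ssh_prod")      # 2 of 3 peers
softened = peer_adjusted_anomaly(0.9, common_rate)         # roughly 0.3

# No peer has ever done a bulk export, so the anomaly is left untouched.
unusual_rate = peer_rate(cluster, "dev-c", "bulk_export")  # 0.0
unchanged = peer_adjusted_anomaly(0.9, unusual_rate)       # 0.9
```

A first-time action that most peers perform routinely ends up with a much lower score than one nobody in the cluster has ever taken.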

This model matters even more now that AI agents are easy to download, easy to integrate into core systems and data, and moving toward autonomous execution.

Context and intent aren’t afterthoughts. They’re the mechanism for detection.

The result is a fundamentally different kind of signal: one that reflects the actual complexity of enterprise identity behavior, rather than forcing it into a statistical frame that was never built to contain it.

Reveal has an upcoming webinar featuring two identity attacks involving compromised credentials where every action looks legitimate. How can you distinguish malicious activity from business productivity? Register here.