TL;DR

OpenTelemetry has made it easy to collect telemetry from AI agents, but most security teams have no idea what to actually do with that data once they have it. The default move is to send it to the SIEM, where it joins the rest of the log pile and gets evaluated against rules. That isn’t good enough, and if you ask the analysts who work those logs and alerts, it never has been. Agentic AI threats live in sequences of events, not in any single one, and agent behavior is non-deterministic, which means there’s no signature to write an effective rule against.

The protection layer for AI agents needs three properties SIEMs don’t have: sequence reasoning, dynamically learned behavioral baselines, and machine-speed response. OpenTelemetry handles ingestion, while the SIEM handles storage. The intelligence layer in between is where the actual security work happens.

The Logs Are Easy. The Insight Is the Hard Part.

Agentic AI workflows generate enormous volumes of trace data. Every tool call, every reasoning step, every downstream API hit. OpenTelemetry has emerged as an obvious standardization layer for collecting all of it, and it’s a good one. It ingests well, it’s open source, it normalizes log formats, and it ships with a robust SDK and API library that makes integration easy.

If you’re rolling out agent observability, OpenTelemetry is the right choice, and you should use it. The hard part isn’t collection.

The hard part is what happens next. Most security teams I talk to have done the work of getting AI telemetry flowing through OpenTelemetry, and now they’re sitting on a growing pile of agent logs with no real plan for how to extract security value from them. The data is there. The insight isn’t.

So the default playbook kicks in. Pipe it into the SIEM, write a few detection rules, hope something useful surfaces. That’s a reasonable starting move, but it’s not the solution. SIEMs are excellent at what they were built for, but they’re not equipped to handle what AI telemetry logs are bringing with them.

What’s Actually Changing: The Attack Surface Has Shifted

Before getting into where the SIEM falls short, it’s worth naming what’s changing underneath all of this.

As enterprises adopt agentic workflows, the attack surface is shifting from the perimeter to the insider. And this new insider threat is not what most security programs were built for. It is not a disgruntled employee downloading files before they quit. It is a behavioral journey: a complex, multistep execution path linking human intent with autonomous agent execution.

That chain is what your AI telemetry is actually showing you. It’s also what your detection layer needs to be able to see, reason about, and respond to. Once you accept that the behavior chain is the unit of risk, the limitations of the SIEM-plus-rules playbook get obvious fast.

Why Doesn’t a SIEM Work for Monitoring AI Agents?

Two structural reasons. Both of them apply just as much to the human identities in your environment as to the agents, which is part of the point.

Reason 1: SIEMs Reason About Events, Not Sequences

A SIEM looks at one event at a time and asks: does this match a known bad pattern? That model works well for discrete bad things like a malware signature, a known-bad IP, a failed login burst, or a repeat of historical malicious tradecraft.

But an agent’s threat profile isn’t in any single event. It’s in the sequence.

Picture an agent that authenticates legitimately. Queries Salesforce legitimately. Pulls from the data warehouse legitimately. Then exfiltrates to an unfamiliar endpoint. Every event passes the rules. The chain and its intent are the threat.

This is exactly the kind of behavior your OpenTelemetry data is capturing. Every step in that sequence is in the log stream. But a SIEM evaluating the stream event by event will see four normal-looking events, not one threat. The signal is in the relationship between the events, and the SIEM doesn’t reason at that layer.
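To make the gap concrete, here is a minimal sketch of the same four-step chain evaluated both ways. The event fields, the destination lists, and the function names are all hypothetical, not an OpenTelemetry schema or any SIEM’s rule language. The chain-level check is itself hard-coded, so it only illustrates where the signal sits, not how a production detection layer would find it.

```python
from typing import Dict, List

# Hypothetical reference data; a real system would not hard-code these.
KNOWN_BAD_DESTINATIONS = {"198.51.100.7"}
SENSITIVE_SOURCES = {"salesforce", "data_warehouse"}
FAMILIAR_DESTINATIONS = {"idp", "salesforce", "data_warehouse", "internal-reports"}


def event_rule_fires(event: Dict[str, str]) -> bool:
    """Per-event rule: fire only when the target is a known-bad destination."""
    return event["target"] in KNOWN_BAD_DESTINATIONS


def sequence_is_suspicious(events: List[Dict[str, str]]) -> bool:
    """Chain-level check: a sensitive read followed later by a write
    to a destination this identity has not used before."""
    touched_sensitive = False
    for event in events:
        if event["action"] == "read" and event["target"] in SENSITIVE_SOURCES:
            touched_sensitive = True
        elif event["action"] == "write" and event["target"] not in FAMILIAR_DESTINATIONS:
            if touched_sensitive:
                return True
    return False


# The chain from the example above, expressed as illustrative records.
chain = [
    {"action": "authenticate", "target": "idp"},
    {"action": "read", "target": "salesforce"},
    {"action": "read", "target": "data_warehouse"},
    {"action": "write", "target": "files.example.net"},
]

per_event_alerts = [event_rule_fires(e) for e in chain]  # one verdict per event
chain_alert = sequence_is_suspicious(chain)              # one verdict for the chain
```

No single event trips the per-event rule because the unfamiliar endpoint is not on any known-bad list. Only the check that carries state across events flags the chain.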

The UEBA features bolted onto modern SIEMs baseline individual entities, but they don’t baseline all of the multi-step execution paths across human and agentic identities. They look at events with extra metadata. They don’t see the journey.

Context matters too. A software engineer doing impossible travel and posting sensitive content to the public web is a problem. A marketing person doing the same thing is at a conference live-tweeting the keynote. The SIEM can’t tell those apart, because it can’t reason about who is doing what, in what role, at what point in a sequence. That’s exactly what behavioral monitoring exists to provide.

Reason 2: Rules Can’t Keep Up With Non-Deterministic Agents

We think of traditional software as deterministic. Same input, same output. That’s the foundation signature-based detection (which is what most SIEM rules are) was built on. You know what the bad pattern looks like, you write a rule for it, and the rule fires when the pattern matches.

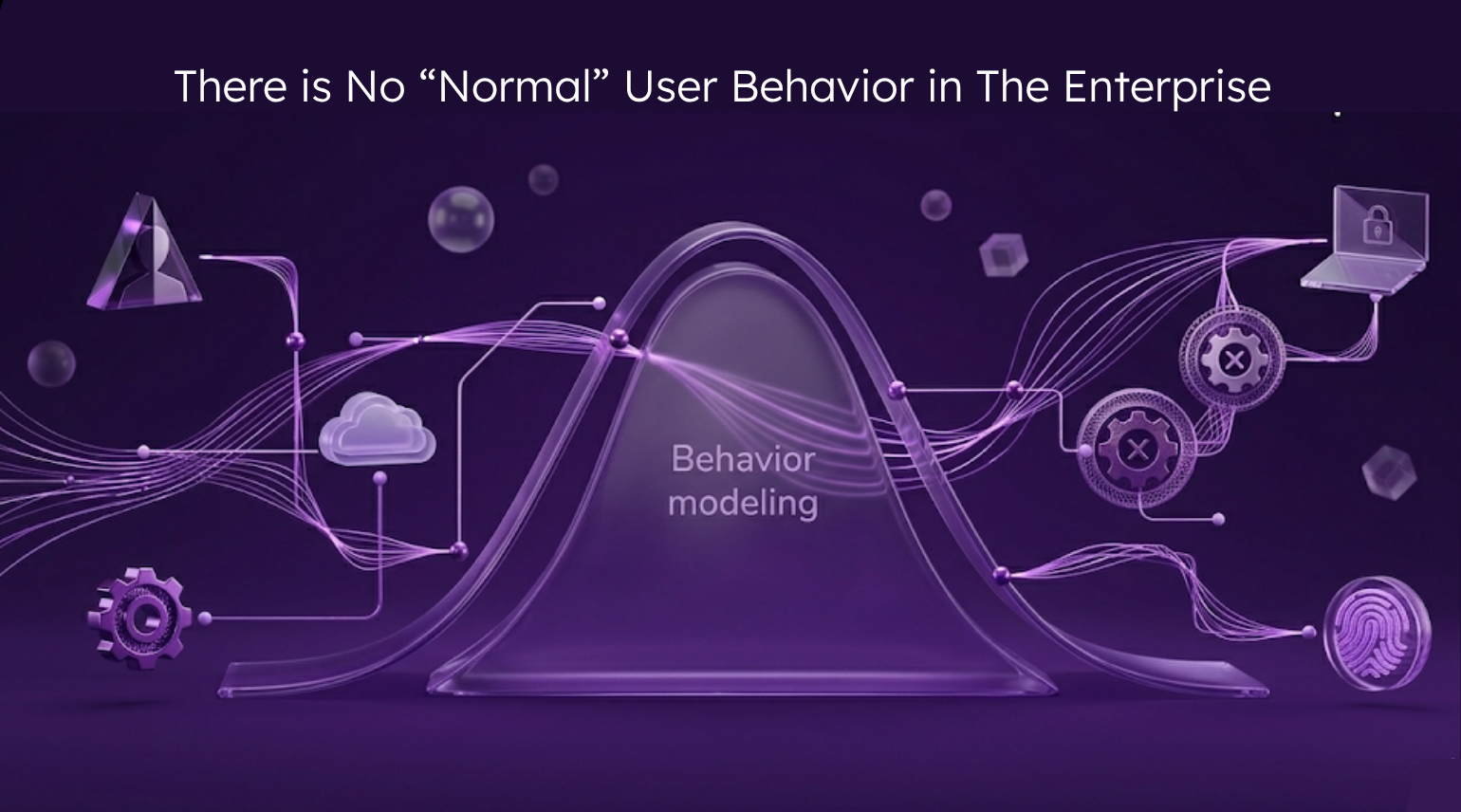

Agentic AI breaks that foundation. Ask the same agent the same question three times and you’ll get three different execution paths. The behavior is non-deterministic by design. There’s no signature to write a rule against, because the signature changes every time the agent runs.

This is where the OpenTelemetry pipeline starts working against you. The richer your agent telemetry gets, the more obvious it becomes that no static rule set can keep up with what the data is actually showing. Your detection engineers are going to spend their lives writing rules that catch the last attack pattern instead of the next one.

Now multiply that by machine speed. A non-deterministic system executing across twelve SaaS apps in four seconds isn’t a problem you solve by adding more rules. It needs a categorically different approach: detection by deviation from learned behavior, not by match against a known pattern. Practitioners sometimes call this signature-less alerting. The principle is simple. You can’t write down ahead of time every possible thing an agent could do. You can only learn what normal looks like, and surface the moments when reality drifts off course.
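As a rough illustration of detection by deviation, the sketch below learns which step-to-step transitions an agent has made in past runs and scores a new run by the share of transitions it has never made. The step names and the scoring formula are assumptions chosen for brevity. Real behavioral models are far richer than a transition count, and this is not a description of any vendor’s implementation.

```python
from collections import Counter
from typing import Iterable, List, Tuple

ExecutionPath = List[str]


def transitions(path: ExecutionPath) -> List[Tuple[str, str]]:
    """Return consecutive (step, next_step) pairs for one execution path."""
    return list(zip(path, path[1:]))


def learn_baseline(history: Iterable[ExecutionPath]) -> Counter:
    """Count every transition observed across historical runs."""
    baseline: Counter = Counter()
    for path in history:
        baseline.update(transitions(path))
    return baseline


def deviation_score(path: ExecutionPath, baseline: Counter) -> float:
    """Fraction of transitions in this run that never appear in the baseline."""
    steps = transitions(path)
    if not steps:
        return 0.0
    unseen = sum(1 for step in steps if baseline[step] == 0)
    return unseen / len(steps)


# Hypothetical history: the same agent takes a different path on each run.
history = [
    ["auth", "crm.read", "warehouse.read", "report.write"],
    ["auth", "warehouse.read", "crm.read", "report.write"],
    ["auth", "crm.read", "report.write"],
]
baseline = learn_baseline(history)

# A path never seen in exactly this order, built only from familiar transitions.
normal_run = ["auth", "crm.read", "warehouse.read", "crm.read", "report.write"]
# A path that ends in a transition the agent has never made.
odd_run = ["auth", "crm.read", "warehouse.read", "external.upload"]

normal_score = deviation_score(normal_run, baseline)
odd_score = deviation_score(odd_run, baseline)
```

The new-but-ordinary path scores zero even though no rule describes it, while the run ending in an unfamiliar upload scores above zero. That is the property a signature cannot provide for a non-deterministic agent.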

This is what UEBA was supposed to be a decade ago. The bar was set right. The technology of the time couldn’t clear it. The combination of cheap compute, modern unsupervised ML, and the urgency of agentic AI is what finally makes it possible.

What Should the Detection Layer for AI Agents Actually Do?

OpenTelemetry gives you the raw material: standardized, ingestible, normalized agent telemetry. The SIEM gives you a place to store it cheaply and run forensic queries against it after the fact. Both are necessary. Neither, on its own, is the detection layer.

What sits in between needs three properties:

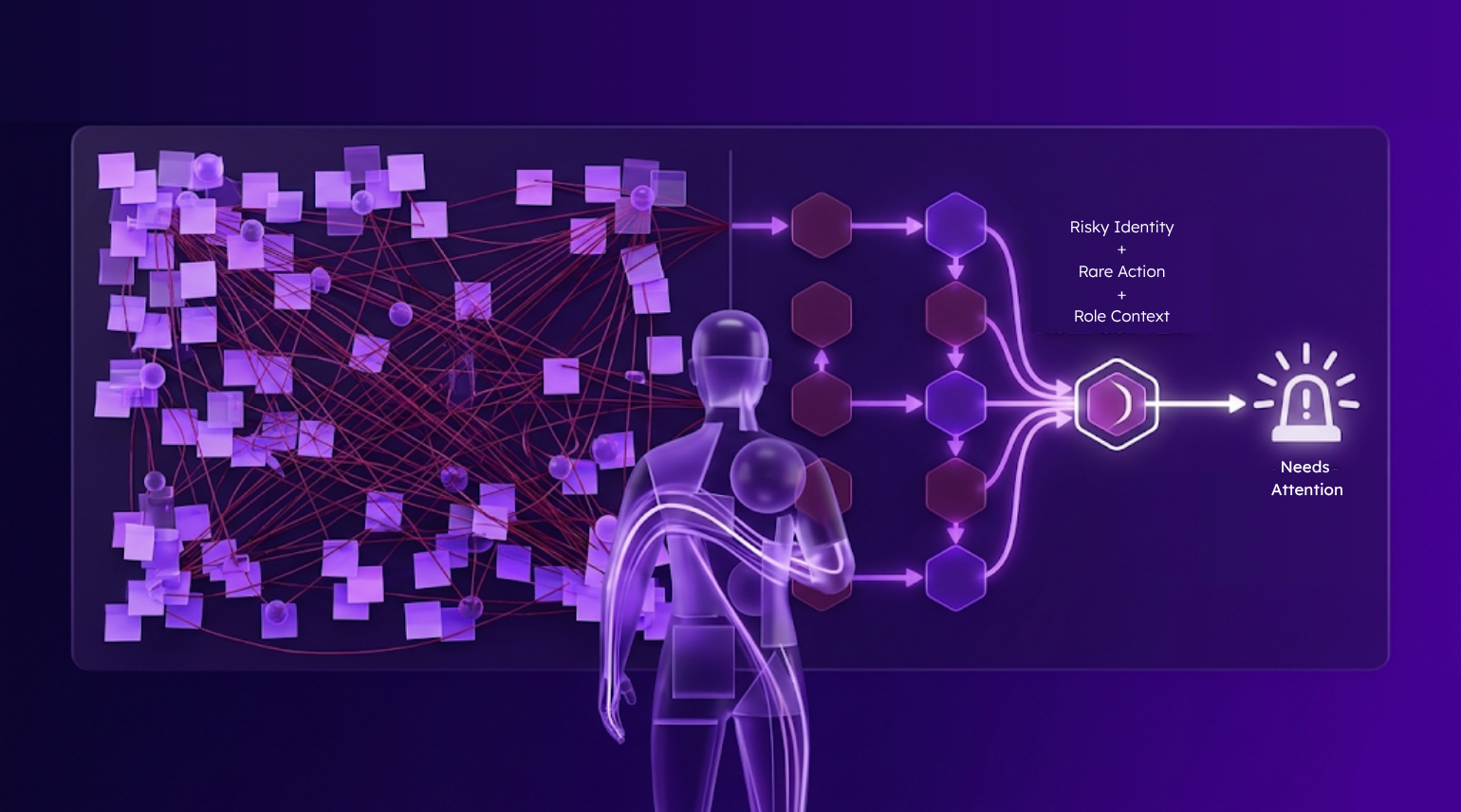

1. Sequence reasoning, not event reasoning. The unit of analysis has to be the behavioral chain, the full multi-step execution path linking a human’s intent to every downstream action an agent takes across every system it touches. If the detection layer can’t stitch that chain together, it’s still looking at the world one event at a time.

2. Dynamically learned behavior, not written rules. Every identity, whether human, agent, or service account, should have a behavioral baseline that the system learns continuously. Detection fires on deviation from that baseline, not on signature match. There’s no signature to write for a non-deterministic system, but there is a pattern to learn.

3. Machine-speed response. An agent can move through twelve systems in four seconds. Human-in-the-loop isn’t fast enough. The detection layer has to be wired directly to runtime response (terminate the session, revoke the token, lock the account) at the moment a chain crosses from productive work into a security-relevant deviation.

That’s the missing layer between OpenTelemetry and the SIEM. It’s the one that turns a growing pile of agent logs into something you can actually defend with.
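The third property can be sketched as a policy that maps a risk score straight to response actions, with no ticket queue in between. The thresholds, the action functions, and the identity name below are hypothetical placeholders. A real deployment would call the identity provider or SaaS admin APIs instead of returning strings.

```python
from typing import Callable, List, Tuple


# Hypothetical response actions; each returns a description of what it did.
def terminate_session(identity: str) -> str:
    return f"terminated session for {identity}"


def revoke_token(identity: str) -> str:
    return f"revoked token for {identity}"


def lock_account(identity: str) -> str:
    return f"locked account for {identity}"


# Illustrative thresholds, ordered from most to least severe.
RESPONSE_POLICY: List[Tuple[float, List[Callable[[str], str]]]] = [
    (0.9, [terminate_session, revoke_token, lock_account]),
    (0.6, [terminate_session, revoke_token]),
    (0.3, [terminate_session]),
]


def respond(identity: str, risk_score: float) -> List[str]:
    """Run the actions for the highest threshold the score meets."""
    for threshold, actions in RESPONSE_POLICY:
        if risk_score >= threshold:
            return [action(identity) for action in actions]
    return []  # below every threshold: take no automated action


low = respond("agent-42", 0.1)
medium = respond("agent-42", 0.7)
high = respond("agent-42", 0.95)
```

Keeping the policy as data rather than code makes it straightforward to route the top tier through a human approval step in environments that require one.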

The Common Shortcut: Stopping at Authentication

A tempting shortcut is making the rounds: just stop the agent at the front door. Restrict OAuth scopes. Block the AI tool at the proxy. Give the security team a feature flag for every connector.

That’s not nothing. You should do it, but it’s not a complete strategy.

For one, employees will use AI anyway. Closing the front door doesn’t stop adoption. It just moves it into the blind spot.

More importantly, the threat isn’t at the entry point. It’s what happens after authentication, when a legitimately authorized agent, running with inherited human permissions, starts doing something it was never supposed to do. By that point the OAuth scope is irrelevant. The behavior is what matters.

Three Things to Prioritize for AI Agent Security in 2026

If you own this problem over the next two quarters, here’s the short version:

1. Get the third-party SaaS surface under control. Credentials are the new perimeter. Every AI tool an employee has wired into Google Workspace or Microsoft 365 is part of your attack surface now.

2. Add a behavioral layer above wherever your logs live. Whether your data lands in a SIEM, a data lake, or somewhere else, you need a layer that reasons about sequences, learns identity behavior dynamically, and fires on deviation. That’s what turns OpenTelemetry data from raw exhaust into actionable intelligence.

3. Stop treating AI as its own problem. Agentic AI is inherited entitlement at machine speed. It behaves like a human identity behaves. Putting it in a separate box with separate tooling and a separate governance program means building two halves of the same capability and never reconciling them. Treat agents as identities, and the rest of the problem gets easier to solve.

Where Reveal Comes In

The architecture I’ve been describing throughout this blog is the architecture Reveal Security was built on. We sit between your telemetry sources (OpenTelemetry, SaaS logs, IdP logs, cloud logs) and your response surface, and we do the work of turning raw agent log data into a coherent behavioral chain you can actually act on.

Three things make this work in practice.

First, continuous behavioral observability. Reveal stitches the cross-system journey together so you see the full chain. From the moment a human launches an agent to every action that agent takes across every system it touches, the path is reconstructed into a single observable behavioral journey. The OpenTelemetry data you’ve been collecting stops being a pile of disconnected traces and starts being a story you can read.

Second, behavioral anomaly detection that doesn’t need rules. Reveal learns what normal looks like for every identity in the chain, human and agent, using unsupervised machine learning. When the execution path drifts into a high-risk anomaly sequence, we surface it. No signature to write. No rule set to maintain. The system gets smarter as your environment evolves, instead of going stale every time a new agent is deployed.

Third, machine-speed response actions. Detection without response is just better-organized noise. Reveal puts the alert, the analysis, and the action in one platform. When a behavioral deviation crosses your risk threshold, sessions terminate, tokens revoke, accounts lock, in real time, automatically or with human-in-the-loop oversight depending on how you’ve configured it.

OpenTelemetry is the standardization layer. The SIEM is the storage and forensics layer. Reveal is the intelligence layer in between. That’s where the real security work on agentic AI is going to live. Apply all of this and you become the team that stops the Vercel breach. The team that notices the Salesloft Drift compromise and throttles the attack. The team that keeps its logo out of posts that have words like “breach” and “million dollar payout” in them. This is the solution. Can you implement it before it’s your logo in the news?